Explaining Intel’s QuickPath Technology

By Jawad Masood | February 24th, 2009

The FSB (front-side bus) could not keep up with the future requirements of computing, with its ever-increasing numbers of cores and processors, multithreaded optimizations and new memory technologies. In 2008, Intel introduced the Quick Path Architecture (QPA) to replace the FSB with a high-bandwidth, low-latency design. Intel also provided point-to-point interconnects between all components, along with some other architectural changes, to make the system more efficient and reliable and to meet the requirements of next-generation Intel-based computing platforms. In QPA, every processor also has its own cache.

The following are some of the major changes and improvements in Intel QPA compared to competing architectures:

- Point-to-Point connections known as Intel QuickPath Interconnects

- Snoop Technology

- Cache Forwarding

- Payload efficiency

- Integrated Memory Controller

QuickPath Interconnects:

Every component in the architecture is connected to every other through high-speed, two-way interconnects. In this environment, data can move simultaneously across any or all of the connections, which provides a high degree of parallelism along with lower latency (time delay).
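To get a feel for the parallelism this allows, here is a minimal sketch that counts the links in a fully connected system. The four processor names and the assumption that every pair gets its own link are illustrative only; real platforms may wire fewer links.

```python
from itertools import combinations


def point_to_point_links(components):
    """Return every unordered pair of components, one link per pair."""
    return list(combinations(components, 2))


# Hypothetical four-processor system, fully connected (an assumption).
processors = ["P1", "P2", "P3", "P4"]
links = point_to_point_links(processors)

# Each link is two-way, so it can carry one transfer per direction at once.
max_parallel_transfers = 2 * len(links)

print(len(links))              # 6
print(max_parallel_transfers)  # 12
```

A single shared bus, by contrast, carries one transfer at a time no matter how many processors sit on it.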

Snoop Technology:

With this new snoop technology, if processor 1 wants data that resides in processor 4, it sends the request to all processors simultaneously. Processors 2 and 3 tell processor 4 which version of the data they hold, and processor 4 then delivers the data to processor 1. This reduces the time needed to fetch the data.

In competing architectures that do not use this snoop technology, if processor 1 needs data that resides in processor 4’s memory, it sends the request only to processor 4. Processor 4 then checks the remaining processors (2 and 3) for a newer copy of the required data. Only after checking processors 2 and 3 does processor 4 send the data to processor 1, which increases the time required to complete the transaction.
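The difference between the two flows is easier to see when the serial steps are written out. The sketch below simply encodes the two descriptions above as lists; the variable and function names are made up for illustration, and it assumes every step costs roughly the same amount of time.

```python
# Each entry is one serial step; messages inside a step travel in parallel.
# Both sequences are transcribed from the text, not from Intel documentation.
SNOOP_BY_REQUESTER = [
    "P1 sends the request to P2, P3 and P4 at the same time",
    "P2 and P3 report the version of their copies to P4",
    "P4 delivers the data to P1",
]

SNOOP_BY_OWNER = [
    "P1 sends the request to P4 only",
    "P4 asks P2 and P3 whether they hold a newer copy",
    "P2 and P3 report the version of their copies to P4",
    "P4 delivers the data to P1",
]


def serial_steps(sequence):
    """Number of steps that must happen one after another."""
    return len(sequence)


steps_saved = serial_steps(SNOOP_BY_OWNER) - serial_steps(SNOOP_BY_REQUESTER)

print(serial_steps(SNOOP_BY_REQUESTER))  # 3
print(serial_steps(SNOOP_BY_OWNER))      # 4
print(steps_saved)                       # 1
```

Under these assumptions the broadcast approach saves one step, because the snoops to processors 2 and 3 go out together with the original request instead of waiting for processor 4 to issue them.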

Cache Forwarding:

This feature enables processors to transfer data directly between their caches. If processor 1 needs data from processor 4’s cache and processor 3 holds a copy of that data, processor 3 sends the data to processor 1 in response to the snoop it received, and processor 4 cross-checks the data in the next cycle. This results in fast, efficient data transfer between processors.

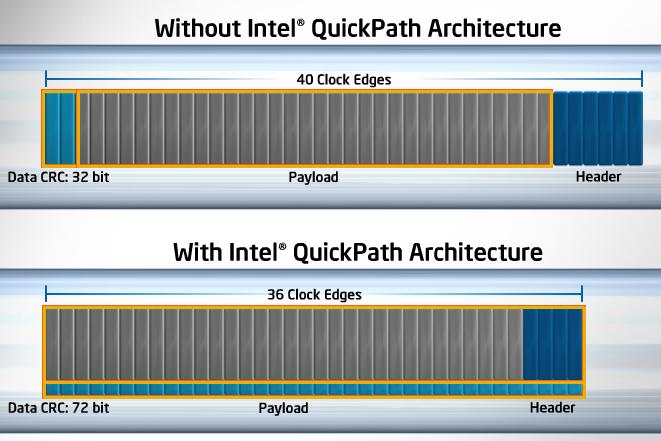
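As a rough sketch of why cache forwarding helps, the function below lists the steps processor 1 has to wait for, with and without a forwarding copy in processor 3. The function name and the step wording are hypothetical; the sequences follow the descriptions in this article and assume the cross-check by processor 4 happens after the data has already arrived.

```python
def steps_until_data_arrives(p3_has_copy):
    """Serial steps before P1 holds the data (illustrative model only)."""
    steps = ["P1 snoops P2, P3 and P4 at the same time"]
    if p3_has_copy:
        # Cache forwarding: the copy comes straight from P3's cache.
        steps.append("P3 forwards its cached copy to P1")
    else:
        # No forwarding: P4 collects the snoop results, then replies.
        steps.append("P2 and P3 report the version of their copies to P4")
        steps.append("P4 delivers the data to P1")
    return steps


print(len(steps_until_data_arrives(p3_has_copy=True)))   # 2
print(len(steps_until_data_arrives(p3_has_copy=False)))  # 3
```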

Payload efficiency:

In simple words, this technology speeds up the transfer of data packets. It takes only 36 clocks to transfer a data packet, compared with 40 clocks in the older architecture. The new architecture also sends 72 CRC bits for error detection in parallel with the packet’s payload, versus 32 bits in older architectures, where the error-detection bits were sent in-line, i.e. after the packet.

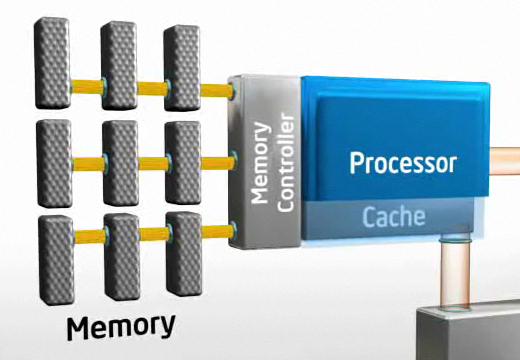
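The figures quoted above can be turned into quick ratios. This is plain arithmetic on the numbers in this article (36 vs 40 clocks, 72 vs 32 CRC bits); the constant names are only for illustration.

```python
QPA_CLOCKS_PER_PACKET = 36    # from the article
OLDER_CLOCKS_PER_PACKET = 40  # from the article
QPA_CRC_BITS = 72             # from the article
OLDER_CRC_BITS = 32           # from the article

# Fraction of clocks saved per packet.
clock_saving = (OLDER_CLOCKS_PER_PACKET - QPA_CLOCKS_PER_PACKET) / OLDER_CLOCKS_PER_PACKET

# How many times more CRC bits protect each packet.
crc_ratio = QPA_CRC_BITS / OLDER_CRC_BITS

print(f"{clock_saving:.0%} fewer clocks per packet")  # 10% fewer clocks per packet
print(f"{crc_ratio}x the CRC bits")                   # 2.25x the CRC bits
```

So each packet takes a tenth fewer clocks while carrying more than twice the error-detection bits.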

Integrated Memory Controller:

Ideally, the data required by an application should reside in the cache of the processor running that application; however, that is often not the case. Because caches cannot keep growing due to other limiting factors, Intel has introduced a new technique: integrating a memory controller into each processor to speed up the movement of data to the application, no matter where the data resides.

Case 1: DATA REQUIRED BY PROCESSOR IS LOCATED IN ITS OWN CACHE

This is the ideal case: if the data is present in the processor’s own cache, latency (time delay) is greatly reduced. That is why Intel is increasing the size and the number of caches in the system.

Case 2: DATA REQUIRED BY PROCESSOR IS LOCATED IN ANY OTHER PROCESSOR

In the new architecture, Intel provides QuickPath Interconnects to cope with this situation, enabling high-speed data transfers between processors.

Case 3: DATA REQUIRED BY PROCESSOR IS LOCATED IN SYSTEM MEMORY

For this case, Intel has provided an Integrated Memory Controller with each processor. Each controller has three paths to memory running at twice the frequency of DDR2-667 memory, increasing total bandwidth by up to 3x.
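For a rough idea of the numbers involved, the sketch below estimates peak bandwidth per memory path and for all three paths together. It assumes each path is a standard 64-bit (8-byte) channel and that "twice the frequency of DDR2-667" means about 1334 million transfers per second; both are assumptions made for illustration, not figures from Intel.

```python
BYTES_PER_TRANSFER = 8    # assumption: 64-bit wide memory channel
DDR2_667_MTS = 667        # million transfers per second
PATHS_PER_CONTROLLER = 3  # from the article


def peak_bandwidth_gbs(mega_transfers_per_sec, channels=1):
    """Theoretical peak bandwidth in GB/s (1 GB = 10**9 bytes)."""
    return mega_transfers_per_sec * BYTES_PER_TRANSFER * channels / 1000


ddr2_single = peak_bandwidth_gbs(DDR2_667_MTS)
doubled_single = peak_bandwidth_gbs(2 * DDR2_667_MTS)
doubled_triple = peak_bandwidth_gbs(2 * DDR2_667_MTS, PATHS_PER_CONTROLLER)

print(round(ddr2_single, 1))     # 5.3
print(round(doubled_single, 1))  # 10.7
print(round(doubled_triple, 1))  # 32.0
```

These are theoretical peaks per controller; sustained bandwidth in a real system will be lower.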

The new architecture has many other features, including self-healing capabilities, reliability, serviceability, different modes of data transfer, and clock error detection and healing, but let’s leave them for the interested reader’s further research!